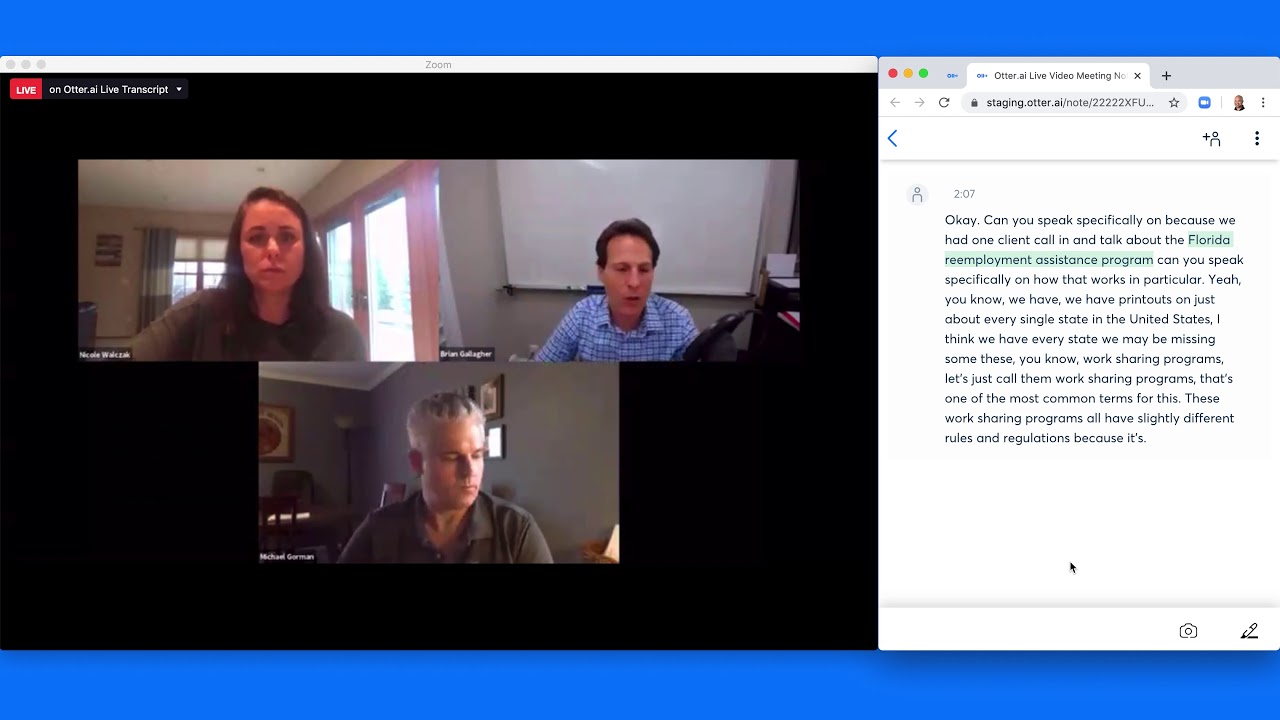

I present here some experimentation with the real-time speech-to-text transcription tools from Amazon and Google. A short video demo:

Transcription results from both streaming and batch processes, along with a clean, human-corrected transcript, are attached here as text files:

Transcription Experiment - OGM call 2020-09-10 - Human-corrected Transcription.txt (1.4 KB)

Transcription Experiment - OGM call 2020-09-10 - AWS Transcribe Streaming.txt (1.3 KB)

Transcription Experiment - OGM call 2020-09-10 - Google Speech-to-Text Streaming.txt (1.3 KB)

Transcription Experiment - OGM call 2020-09-10 - AWS Transcribe Batch.txt (1.5 KB)

Transcription Experiment - OGM call 2020-09-10 - Google Speech-to-Text Batch.txt (1.2 KB)

Transcription Experiment - OGM call 2020-09-10 - Google Speech-to-Text Batch with Punctuation.txt (1.2 KB)

(Some data are left out, such as timing and lower-confidence alternative transcriptions.)
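
One way to put a number on how close each machine transcript comes to the human-corrected one is word error rate (WER): the word-level edit distance divided by the number of words in the reference. The sketch below is a minimal illustration, not something used in this experiment; the function names `tokenize` and `word_error_rate` are hypothetical, and the tokenization (lowercase, punctuation dropped) is an assumption that ignores punctuation differences between the samples.

```python
import re


def tokenize(text):
    """Lowercase the text and keep only word-like tokens (assumed normalization)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def word_error_rate(reference, hypothesis):
    """Word-level edit distance between the two texts, divided by reference length."""
    ref = tokenize(reference)
    hyp = tokenize(hypothesis)
    if not ref:
        raise ValueError("reference transcript has no words")

    # Classic dynamic-programming edit distance, one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, 1):
        cur = [i]
        for j, hyp_word in enumerate(hyp, 1):
            cost = 0 if ref_word == hyp_word else 1
            cur.append(min(
                prev[j] + 1,         # reference word missing from hypothesis
                cur[j - 1] + 1,      # extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = cur
    return prev[-1] / len(ref)
```

Calling `word_error_rate(human_text, machine_text)` with the contents of two of the attached files would give a score where 0.0 means the words match exactly; lower is better.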

Along with the practical rewards of transcribing OGM and similar calls, I think there are potential business opportunities here, and I am thinking about exploring them more seriously.

- The underlying machine transcription technology is “good enough” (while not yet perfect, which means there is opportunity).

- There are not many vendors offering productized versions of the technologies (the biggest are probably Otter and Descript).

- There are a number of potential models for productization that haven’t been explored.

Some potential products or services:

- Transcribe virtual calls or physical meetings (the latter subject to pandemic precautions).

- Real-time machine transcription into a collaborative text editor, where one or more humans could clean up and improve the transcription. The result could be near-live and near-perfect transcription.

- Offer the above as either a product or a service.

- Use the cleaned-up near-live transcription as input for graphic facilitators, narrative facilitators, or highlight facilitators, who would feed images, narrative structure, or highlights back into the meeting.

- Use the above to offer the ability for someone to attend to multiple calls simultaneously, as they multitask but keep up on each call’s written transcript.

- Use machine transcription to recognize key phrases, then have a bot look up those phrases in Google, Jerry’s Brain, etc., as Jerry suggested recently on the OGM mailing list.
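
The key-phrase look-up idea in the last item could start out as something very small. The following Python sketch is only an illustration under stated assumptions: the watch list `WATCH_PHRASES` and the helper names `find_phrases` and `search_url` are hypothetical, the phrases would need to be curated by a human, and only a plain Google web-search URL is built (no Jerry’s Brain integration is attempted).

```python
import re
from urllib.parse import quote_plus

# Hypothetical watch list; in practice a human would curate this.
WATCH_PHRASES = ["collaborative text editor", "graphic facilitator"]


def find_phrases(transcript, phrases=WATCH_PHRASES):
    """Return the watch-list phrases that occur in the transcript, in list order."""
    # Lowercase and collapse whitespace so line breaks do not hide a match.
    normalized = " ".join(transcript.lower().split())
    found = []
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase.lower()) + r"\b"
        if re.search(pattern, normalized):
            found.append(phrase)
    return found


def search_url(phrase):
    """Build a Google web-search URL for one phrase."""
    return "https://www.google.com/search?q=" + quote_plus(phrase)


if __name__ == "__main__":
    chunk = "We could pipe this into a collaborative text editor."
    for phrase in find_phrases(chunk):
        print(phrase, "->", search_url(phrase))
```

Matching is exact on whole words, so a phrase the machine mis-transcribes will simply be missed; a real bot would likely need fuzzier matching or a human in the loop.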

Anyway, for this experiment, I played my check-in from the YouTube recording of the most recent OGM check-in call, simulating a live Zoom call, and ran the audio into both Amazon and Google transcription in streaming (real-time) mode. I also ran the whole call through batch transcription with both Amazon and Google.

The transcription starts with the end of Tony’s check-in, then Jerry switching over to me, then my check-in, then Jerry switching over to Avril. My voice and speech quality are okay but not great: the volume varies, and there are a number of filler words (um, uh) to navigate.

All of the machine transcriptions are good, but not perfect. Some quick observations:

- This is a technology demo, of course, not a product yet. But it still shows great promise.

- The Google transcription colors are red for “draft” transcription, and green for a completed chunk. The distracting duplication of lines is an artifact of the library I’m using, which can handle one draft line of text, but duplicates lines when there is more than one draft line of text.

- Google’s streaming latency is really good – almost real-time. (This is similar to what I see on my Pixel smartphone with Google’s “Live Caption” feature, by the way.)

- Amazon’s streaming latency is a little challenging – it sometimes takes seconds to catch up. I am guessing the cause is not the backend but the library I’m using to transfer the audio to the cloud, so it might be possible to get low latency with Amazon streaming, too.

- Punctuation is really nice. The default for Google is “no punctuation”, but you can enable punctuation for Google, which I did with one Google batch sample. I missed enabling it for the streaming sample.

- Less-common words and names are generally harder for the machines to recognize. This would make it hard to do the auto-look-up bot work without human assistance.
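
On the draft-versus-completed display mentioned above: both streaming services mark each result as interim or final (Google's streaming results carry an `is_final` flag, Amazon's carry `IsPartial`). One way to avoid duplicated draft lines is to keep the state in memory and redraw from it, rather than trying to overwrite a single line on screen. The Python sketch below is a minimal illustration of that idea, not how the library used in the demo works; the class `TranscriptView` and the `(text, is_final)` update shape are assumptions made for the example.

```python
class TranscriptView:
    """Holds finalized chunks plus one replaceable draft chunk."""

    def __init__(self):
        self.final_lines = []  # completed chunks (shown in green in the demo)
        self.draft = ""        # current draft chunk (shown in red in the demo)

    def update(self, text, is_final):
        """Apply one streaming result."""
        if is_final:
            self.final_lines.append(text)
            self.draft = ""
        else:
            # Replace the draft rather than appending, however long it grows.
            self.draft = text

    def render(self):
        """Return the full display text, rebuilt from state each time."""
        lines = list(self.final_lines)
        if self.draft:
            lines.append("[draft] " + self.draft)
        return "\n".join(lines)


if __name__ == "__main__":
    view = TranscriptView()
    for text, is_final in [
        ("hello", False),
        ("hello every", False),
        ("Hello everyone.", True),
        ("next up", False),
    ]:
        view.update(text, is_final)
    print(view.render())
```

Because the display is rebuilt from `final_lines` and `draft` on every update, a draft that wraps across several screen lines still appears only once.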

I am expecting to continue to play around with these technologies – let me know if you’d like to be involved.